Jump to the "libWebRTC" section if you are in a hurry and not interested in a general overview of the WebRTC space.

If you missed Part 1, here it is: WebRTC 102: Demystifying ICE

Introduction

Anyone who has delved even slightly into the world of real-time audio and video communication over the internet has surely heard of WebRTC, the standard for ultra-low-latency, real-time media communication over the Internet! You may have also tried building a simple P2P application using RTCPeerConnection, or tinkered with higher-level libraries such as Mediasoup, Pion, and Jitsi, to name a few. But something is missing... what really powers this new family of protocols? How does it work? If you are interested in the nitty-gritty of WebRTC, this series is for you!

Why Should You Care About WebRTC?

WebRTC is what powers all of the magic behind the scenes. Understanding it will help you optimize for a wider range of environments, make low-level modifications for your particular use case, and more.

So without further ado, let's get started!

A Small Refresher on the Current State of WebRTC

To understand why libWebRTC exists and why it is so important, we should start with its roots in 2011, when Google first announced a shiny new open-source project for web browsers.

The project has since moved to its own website, webrtc.org, and the source now lives at https://webrtc.googlesource.com/src/. This repository contains the native WebRTC library used by most modern browsers (Chrome, Firefox, Opera, etc.).

Of course, browser vendors rarely ship exactly the same library version, because of compatibility concerns and differing release cycles. For example, here is Firefox's clone of libWebRTC on GitHub, with Mozilla's own modifications to the project.

The Actual WebRTC Standard

libWebRTC is amazing, but the real mission of maintaining interoperability between platforms, browsers, etc., is carried out by two organizations:

- IETF: The Internet Engineering Task Force maintains a collection of documents defining the standards for real-time communication. These documents do not cover the WebRTC API itself, but rather the codecs and protocols that WebRTC clients use to communicate with each other, such as ICE, STUN, and RTP.

- W3C: The World Wide Web Consortium maintains the specification for the official WebRTC API that you commonly see in browsers (RTCPeerConnection). All browsers are expected to comply with this specification so that JavaScript clients are cross-compatible. In practice, however, Chrome (or "Chromium") is by far the closest implementation of this spec, while other projects currently implement a subset of the standard.

When we refer to WebRTC as a whole, we usually mean these two sets of specifications, not just Chrome's WebRTC implementation.

Now that we have an idea of the WebRTC space, let's dive deeper into libWebRTC!

libWebRTC

libWebRTC is a native C/C++ implementation of the WebRTC API that supports Windows, macOS, and Linux. On mobile, it also provides Java and Objective-C bindings for Android and iOS, respectively.

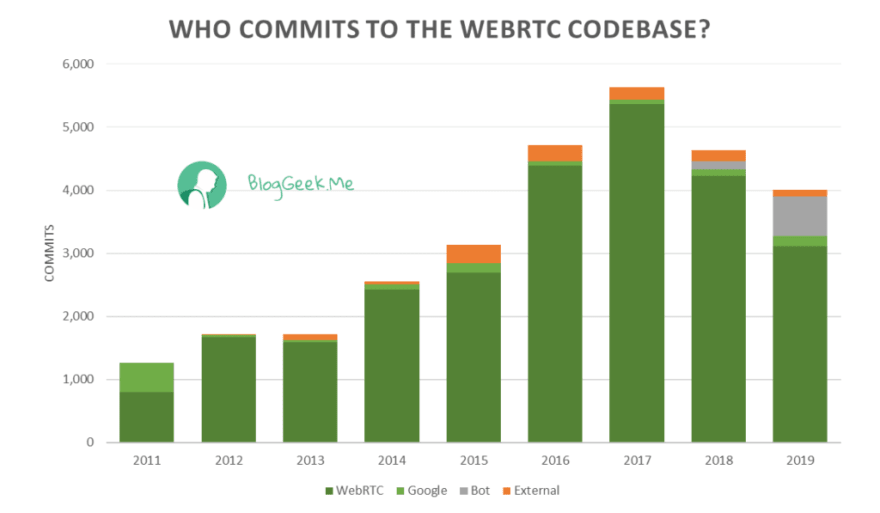

Source for image: BlogGeek.me

Yes, the project is open source, but Google and other browser vendors (Mozilla, Opera) have been the primary contributors and driving force behind the codebase since its inception. And although web browsers are the most popular use case for libWebRTC, nothing stops you from using it as a native library to communicate with other WebRTC peers.

Should you be using libWebRTC?

Unless you are particularly keen on dealing with C/C++ and the many pain points that come with it, you might find it easier to use one of the other open-source projects implementing the WebRTC specs, which we cover below.

The Mediasoup project provides a high-level JavaScript/TypeScript interface to the WebRTC APIs, with its core logic implemented in C++ and Rust. Consider taking a look at it if you want an easier-to-use library than the low-level libWebRTC APIs.

Another notable project is Pion/webrtc, a Go implementation of the WebRTC API. And of course, we should mention the Rust port, WebRTC.rs. Let's keep all the Rustaceans happy too!

If ease of use is not particularly important and you need cross-platform support, libWebRTC is definitely the safest bet for building native WebRTC clients that are compatible with Chrome, Firefox, and other browsers.

Getting started with libWebRTC

Now that we have talked about the WebRTC space, let's go over how to start working on the libWebRTC codebase.

Cloning the repo

You can clone the source directly using git clone https://webrtc.googlesource.com/src, but it is recommended that you install Google's depot_tools, which includes a set of tools for working with the project.

Start by cloning depot_tools:

```shell
git clone https://chromium.googlesource.com/chromium/tools/depot_tools.git
```

The root folder of the depot_tools repository contains executables (such as fetch and gclient) that we'll use next, so it is convenient to add it to your PATH variable:

```shell
export PATH=/path/to/depot_tools:$PATH
```

Next, we can get the libWebRTC source by running:

```shell
mkdir webrtc-checkout
cd webrtc-checkout
fetch --nohooks webrtc
gclient sync
```

Now, the src folder should have the entire source code from libWebRTC's main branch!

Building the source

Chromium, libWebRTC, and other Google projects use the Ninja build system.

But before we can use ninja to build the project, we must use another tool, gn.

gn reads the configuration in the project's BUILD.gn files and generates Ninja build files for different operating systems and targets (executables, shared libraries, etc.).

The webrtc.gni file contains boolean flags that control which components of the project are built, which you can tweak if you wish. For now, we will stick to the defaults.

To generate Ninja's build files, run the following from inside the src folder:

```shell
gn gen out/Default
```

By default, this generates files for a debug build, which includes the extra debugging information we will use later while debugging one of the examples in the repository.

Finally, to start the build using ninja, run:

```shell
ninja -C out/Default
```

libWebRTC's Threading Model

The first step in working on any codebase is locating its entry point. Since libWebRTC is a library, it's hard to narrow this down to a single entry point, but most applications start by calling the CreatePeerConnectionFactory function, which creates the factory used to build WebRTC PeerConnection objects. The first three parameters of this function are essential to understanding the entire codebase. They are instances of the rtc::Thread class, and they are used throughout libWebRTC to run different kinds of tasks on separate threads, so that heavy processing does not bottleneck network I/O or calls into the external API:

- network_thread: Handles network I/O, that is, sending and receiving the actual packets flowing through your peer connection, and performs only minimal processing.

- worker_thread: The most resource-intensive of the three; it processes the individual audio and video streams sent to and received from peers.

- signaling_thread: All of the PeerConnection's external API methods run on this thread, and so do all callbacks into application code. It is therefore essential that users of the library do not block inside callbacks, since doing so blocks the whole signaling thread.

Throughout the codebase, libWebRTC uses the RTC_DCHECK_RUN_ON macro to assert that function calls are running on the correct threads. This is also super helpful for someone reading the codebase to know which thread each function runs on.

libWebRTC's peerconnection Example

The best way to get your hands dirty with libWebRTC is with the peerconnection example in the src/examples/peerconnection directory of the project. If you followed the steps in the "Building the source" section, the example should already have been built according to the default rules in the webrtc.gni file.

Note: At the time of writing, we are using commit bdf25670973611ae0c2562a43922e6120edab070. Some of the information in this section might become outdated in the future.

The architecture of the example

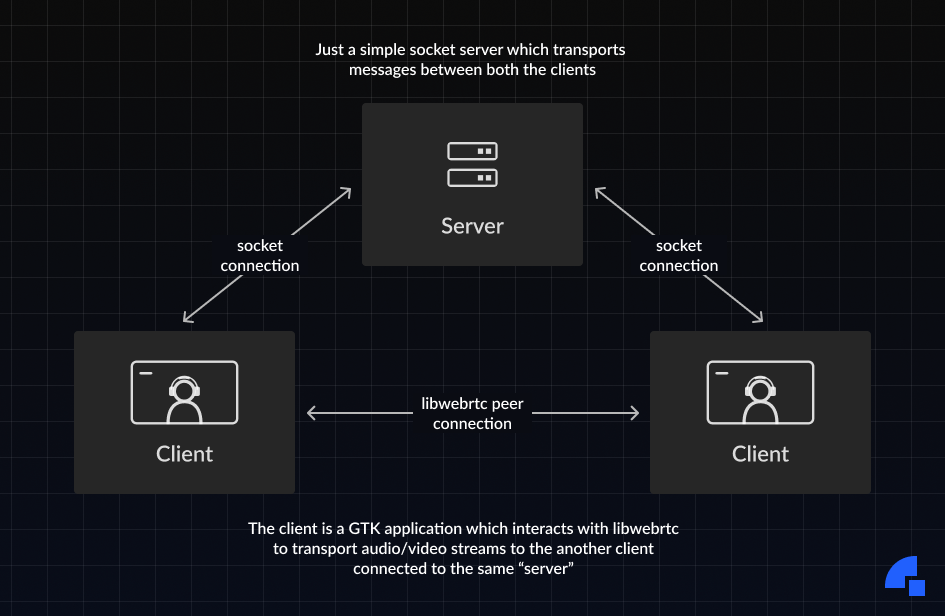

The example outlines how to set up a simple peer connection to send a video stream from one client to another client and display it on the receiving end on top of a GTK window:

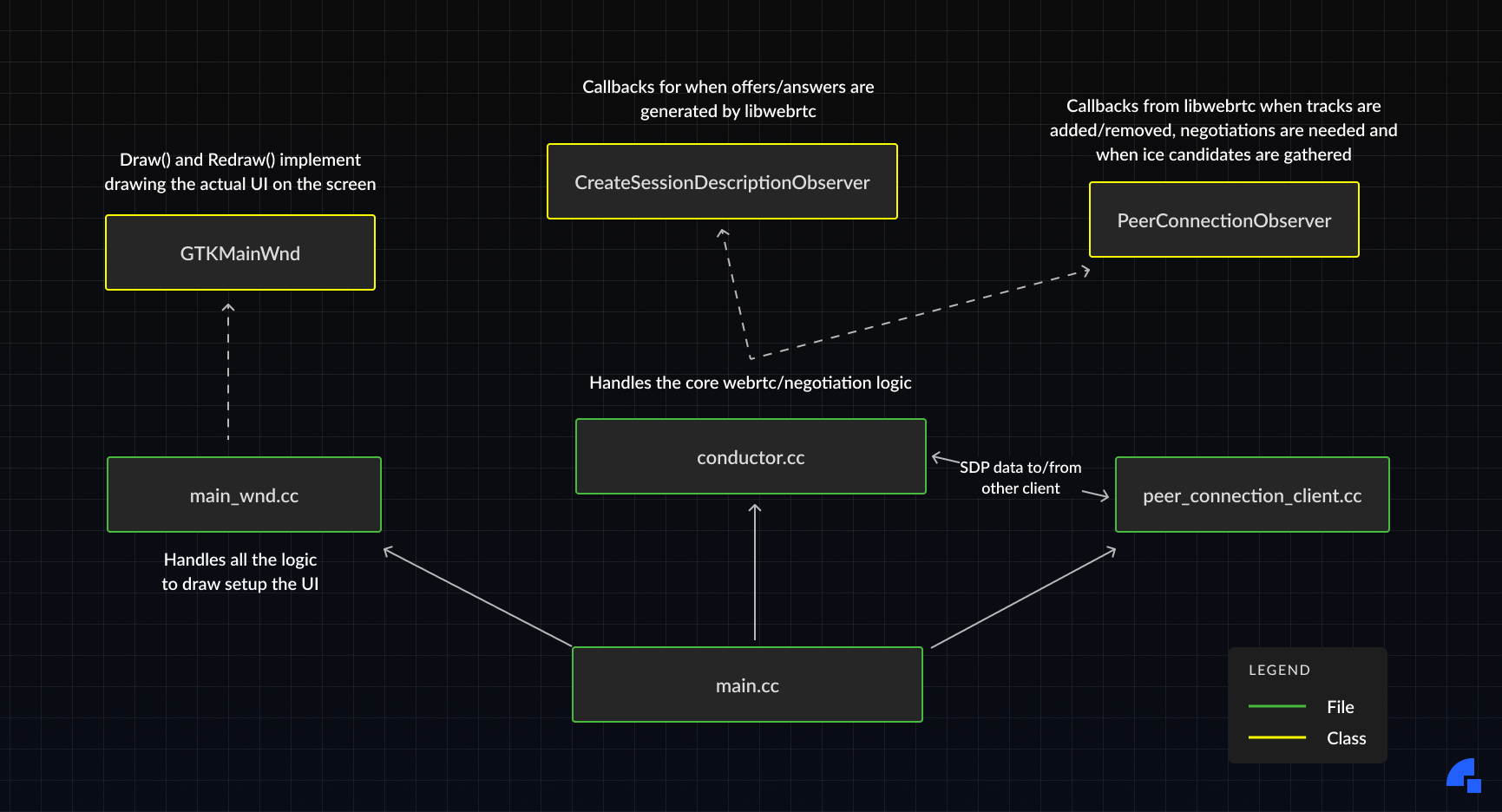

As we can see in the above diagram, there are two components in this example: the client and the server. In this blog post, we will concentrate on the client code as the server logic is irrelevant to LibWebRTC itself and is just a simple socket server that routes traffic between the clients. The architecture of the client application is as follows:

Running the code

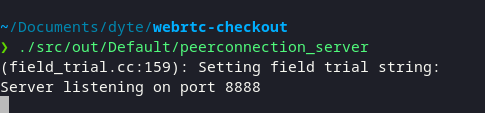

In the rest of this guide, we'll assume that you are using a Linux machine. To run the example, we first need to start the server process, using the binary we built earlier (run this from the webrtc-checkout folder):

```shell
./src/out/Default/peerconnection_server
```

This should start up the socket server on port 8888:

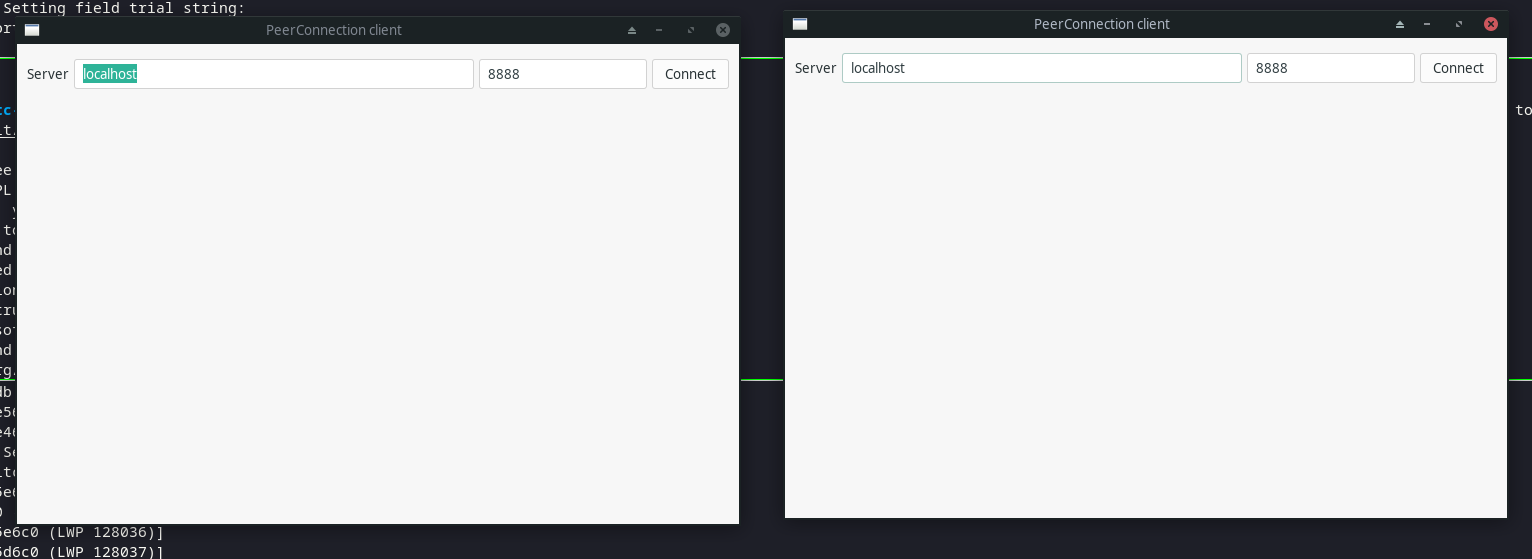

Now, open two more terminal windows and run the client application in each of them. It is useful to run these clients under gdb, as this will help us debug crashes when we make changes to the codebase:

```
gdb ./src/out/Default/peerconnection_client
(gdb) r
```

If the clients start up correctly, you should see two GTK windows pop up.

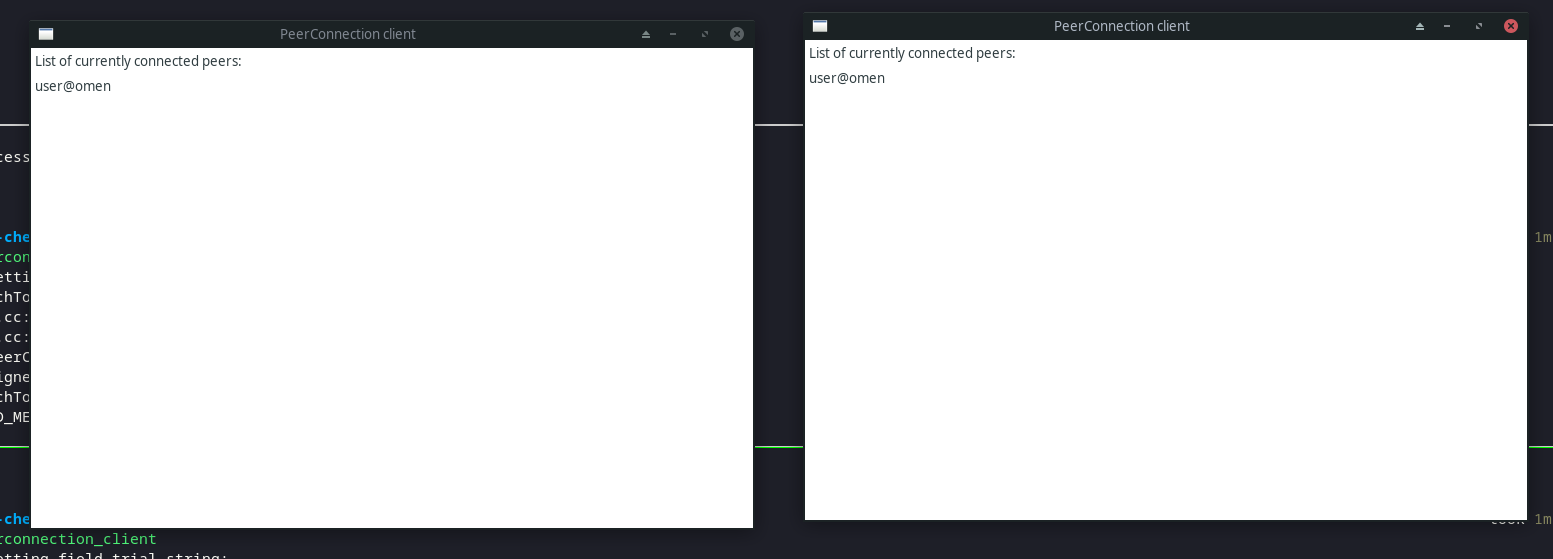

Click Connect in both windows, and you should now see the list of connected peers in each of them.

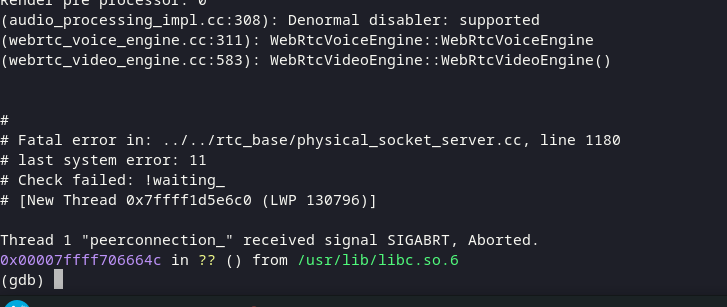

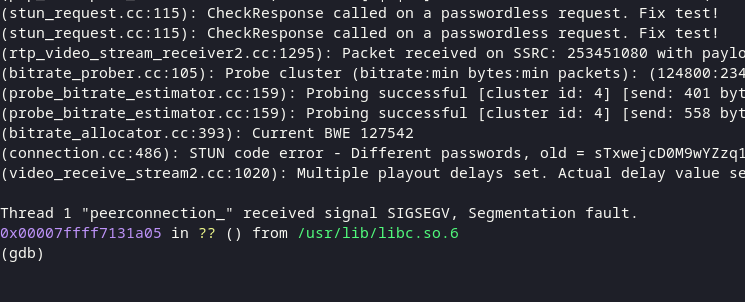

Now, click on the peer on any one of the windows. This should set up a WebRTC connection that streams video from that window to the other window! But at the time of writing this article, this seems to fail with the following error: 😞

Let’s try debugging why this error happens.

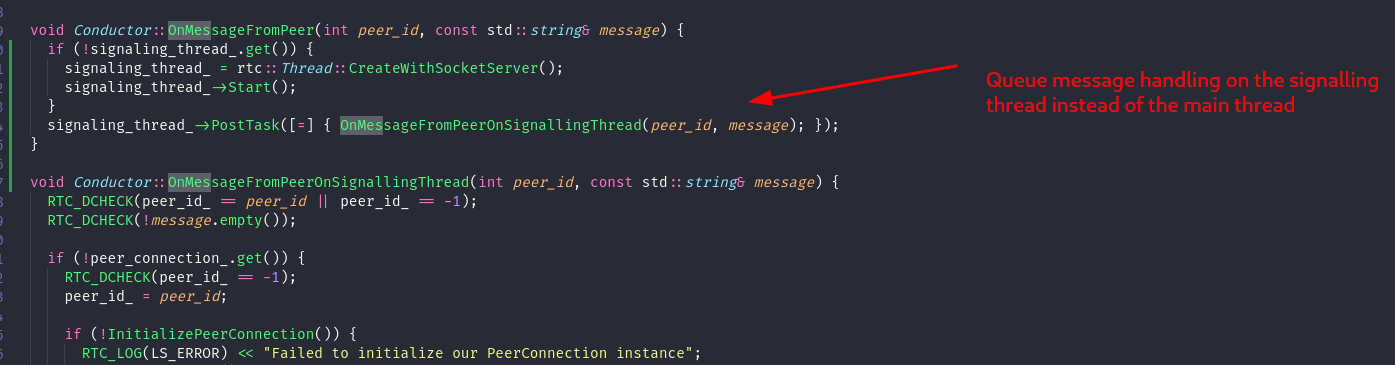

Debugging/Fixing the client binary crash #1 (Race condition in thread management)

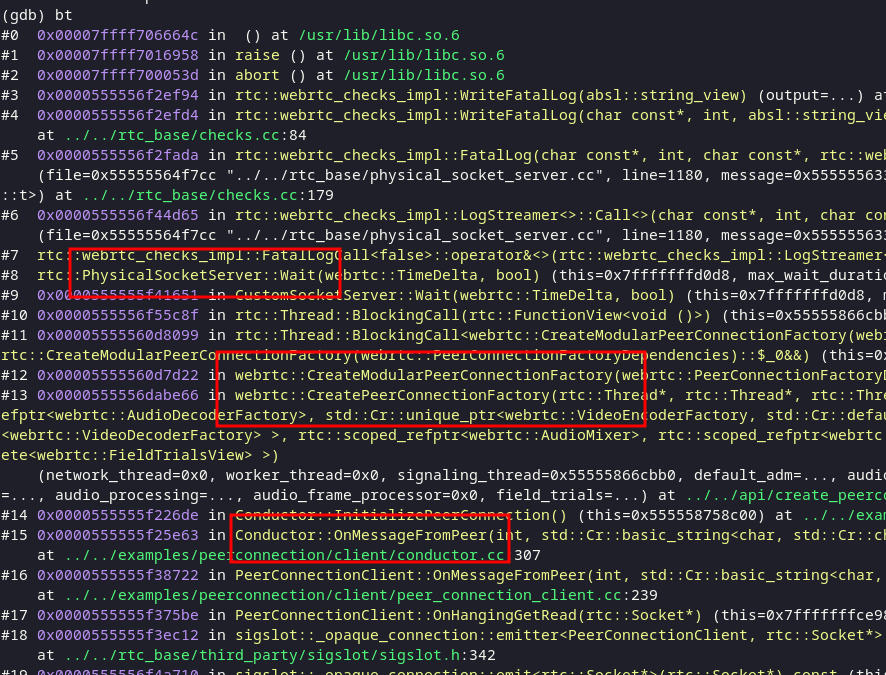

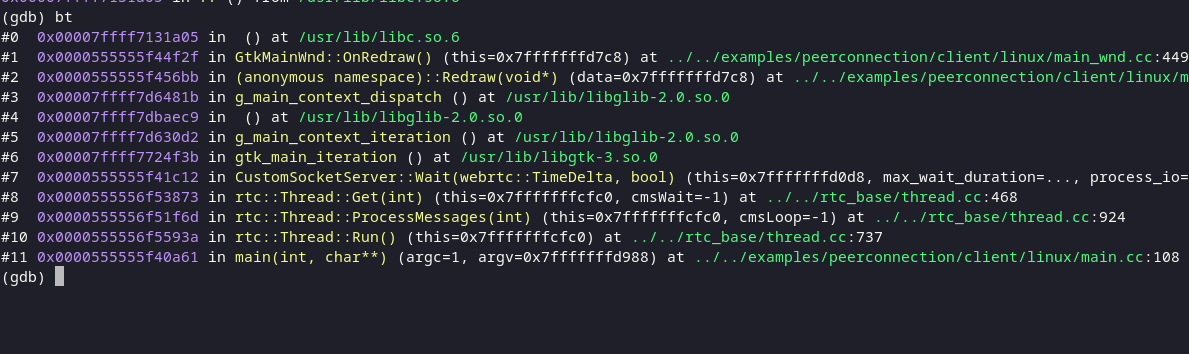

Running bt in the gdb window shows the call stack at the time of the crash. We can see that the following functions were called:

- PhysicalSocketServer::Wait() is invoked on the client's main thread and blocks until a socket message arrives.

- Clicking on the peer in the UI results in a socket message, which triggers OnMessageFromPeer.

- This triggers the creation of a new PeerConnection, which seems to call PhysicalSocketServer::Wait() again, recursively.

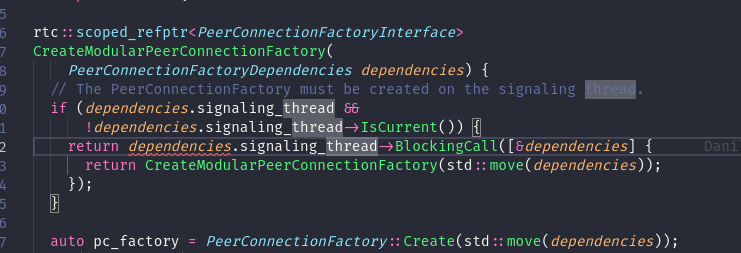

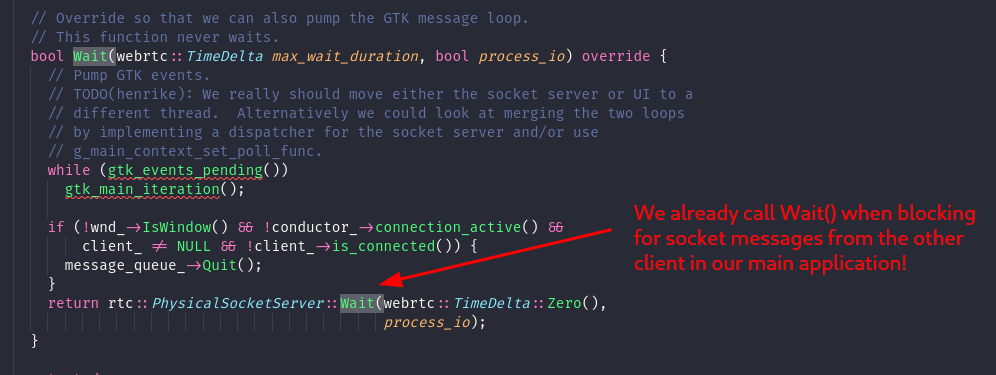

Digging into the code, we found that this happens because of a caveat in the threading model covered earlier. We call CreatePeerConnectionFactory on the main thread of our client application, but the factory's constructor checks that its work runs on the signaling thread. Since we invoked it from a different thread, it queued a call onto the signaling thread and blocked the main thread until that call had completed on the signaling thread.

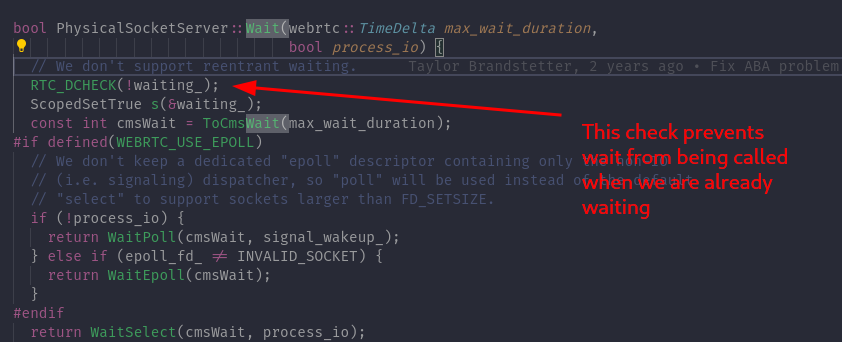

This causes Wait() to be called on our main application’s thread recursively, and the rtc::Thread class doesn’t allow Wait() to be called twice in this manner:

We can prevent this issue by simply queuing the function call on the signaling thread instead of synchronously calling it on the main thread of our application:

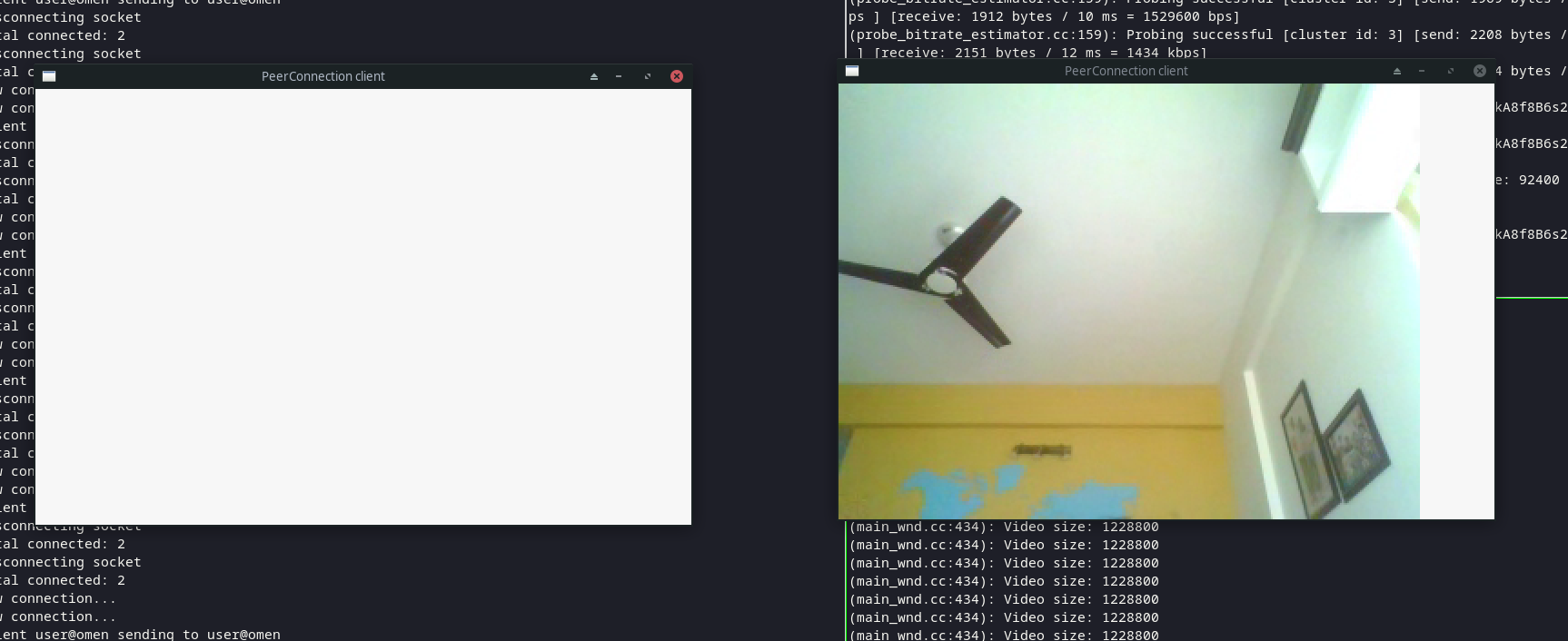

Here is a patch on GitHub Gist with the fixes, which you can apply to your local checkout. Now, when we rebuild and run the code, the video stream shows up correctly!

This works for a short while, but eventually the video stream freezes on the receiving client, which then crashes with a different segmentation fault:

Let's look into why this happens.

Debugging/Fixing the client binary crash #2 (Buffer overflow in Video Renderer)

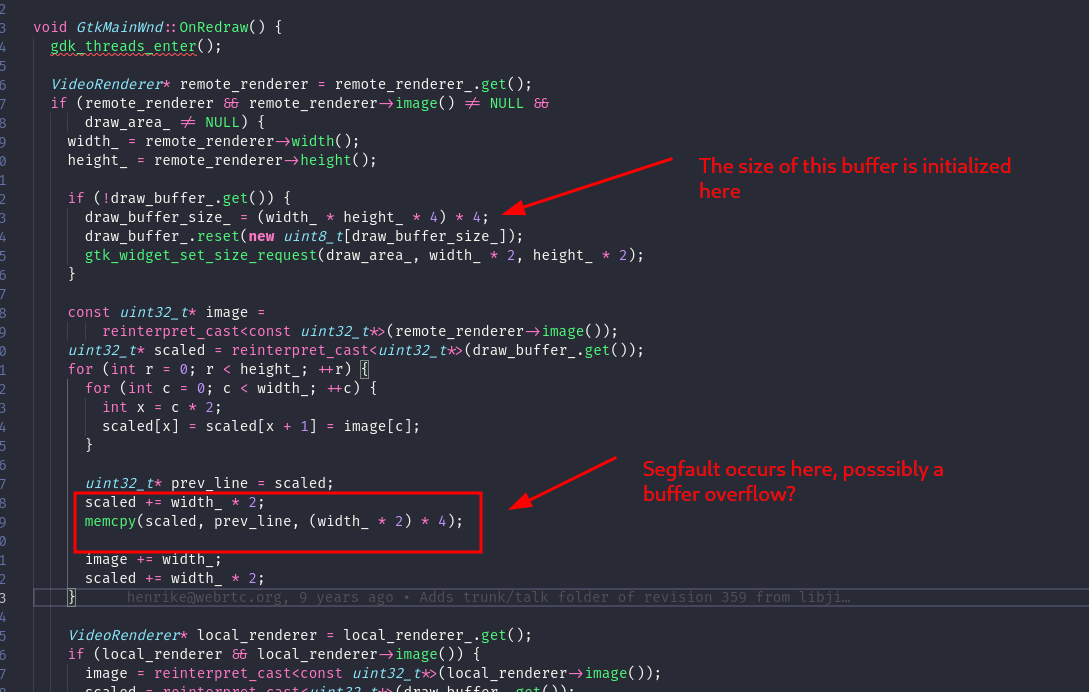

Looking at the call stack, we see that the crash occurs in the Redraw() function in the main_wnd.cc file:

Analyzing OnRedraw(), we found that it is responsible for copying the peer's video from the remote_renderer into the draw_buffer. In the process, the code scales the source video up by 2x: each pixel is duplicated horizontally, and each widened row is then copied with memcpy to produce the row below it.

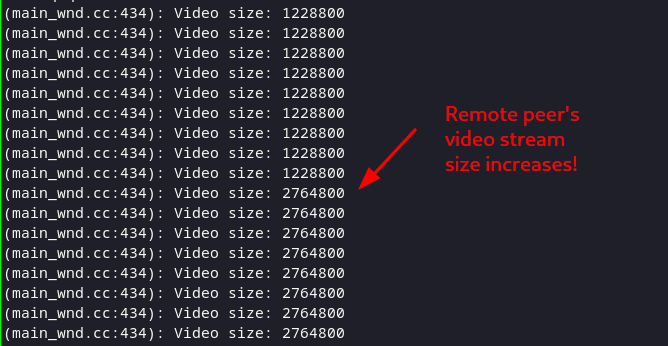

Since the memcpy only segfaults after the stream has been running for a while, a likely explanation is that the resolution of the incoming video increases partway through the call (WebRTC senders commonly start at a lower resolution and step it up as bandwidth allows). Because the draw_buffer is initialized only once, at the original size, the copy eventually writes past its bounds. We can confirm this by adding a log line that prints the width and height of the remote_renderer's video:

```cpp
RTC_LOG(LS_INFO) << "Video size: " << remote_renderer->width() << "x"
                 << remote_renderer->height();
```

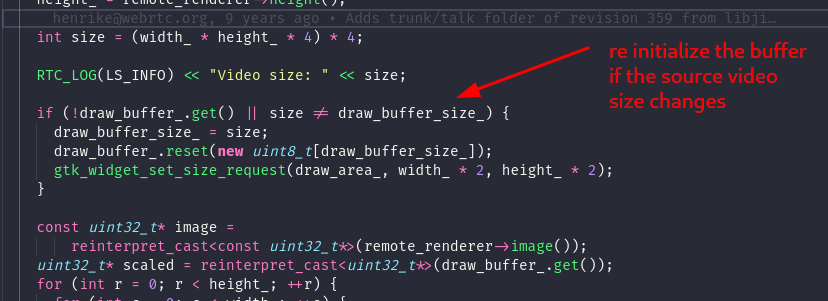

We can fix this bug by re-initializing the draw buffer each time the size of the remote_renderer’s video changes, as outlined in this patch:

Now the video stream should render correctly for an indefinite period of time!

Conclusion

Congratulations, you've reached the end of this article! You should now have a basic understanding of libWebRTC and how to work with the project. If you are interested in contributing, head over to WebRTC's bug tracker and try your hand at squashing some bugs 😉

Try Dyte if you don't want to deal with the hassle of managing your own peer-to-peer connections!

If you haven't heard of Dyte yet, go to https://dyte.io to learn how our SDKs and libraries revolutionize the live video and voice-calling experience. Don't just take our word for it; try it for yourself! Dyte offers 10,000 free minutes every month to get you started quickly. If you have any questions or simply want to chat with us, please contact us through support or visit our developer community forum. Looking forward to it!