Gathering insights from your audience is crucial for making informed decisions, but traditional surveys have limitations: lengthy forms and slow-loading pages often cause respondents to drop out. Async survey tools address this by letting respondents answer on their own time, which makes for a more engaging and efficient survey-taking experience. In this blog post, we'll look at what async survey tools are, why you should consider building one, and how to build one with Dyte.

Why build an async survey tool?

Asynchronous survey tools take a different approach to data collection: respondents answer when it suits them instead of in a single live sitting. Here are some reasons to consider building your own async survey tool:

- Enhanced user experience: Traditional surveys can be tedious, leading to survey fatigue and dropouts. Async survey tools let respondents answer questions at their own pace, which can reduce abandonment and makes for a better user experience.

- Increased response rates: By accommodating respondents' schedules and allowing them to participate when convenient, async surveys are more likely to garner higher response rates than their synchronous counterparts.

- Global reach: Async surveys work across time zones and geographical boundaries. They let you collect data from a diverse global audience, making them ideal for research that spans broad demographics.

- Data quality: Respondents can provide well-thought-out responses with asynchronous surveys, leading to higher-quality data. This is especially valuable when conducting in-depth research or gathering detailed feedback.

- Flexibility and customization: Building your own async survey tool gives you complete control over the design, structure, and features. You can tailor it to the specific needs of your research so that it matches your objectives.

- Seamless integration: Because you own the code, you can integrate your async survey tool with your other data collection and analysis tools and streamline your research process.

- Competitive advantage: Having your own async survey tool sets you apart from competitors. It demonstrates your commitment to delivering a superior user experience and gathering accurate data.

In the following sections, we'll walk through the technical side of building an asynchronous video survey tool: the application flow, the recording logic, and the checks that help respondents record a usable answer.

Before you start

- This guide assumes you have basic knowledge of HTML, CSS, and JavaScript, along with React.

- You will need an API Key and Organisation ID from dev.dyte.io to perform operations with Dyte's REST APIs. Check out the API documentation at docs.dyte.io/api. To learn the basic concepts and get started with Dyte, read this guide: docs.dyte.io/getting-started.

Application flow

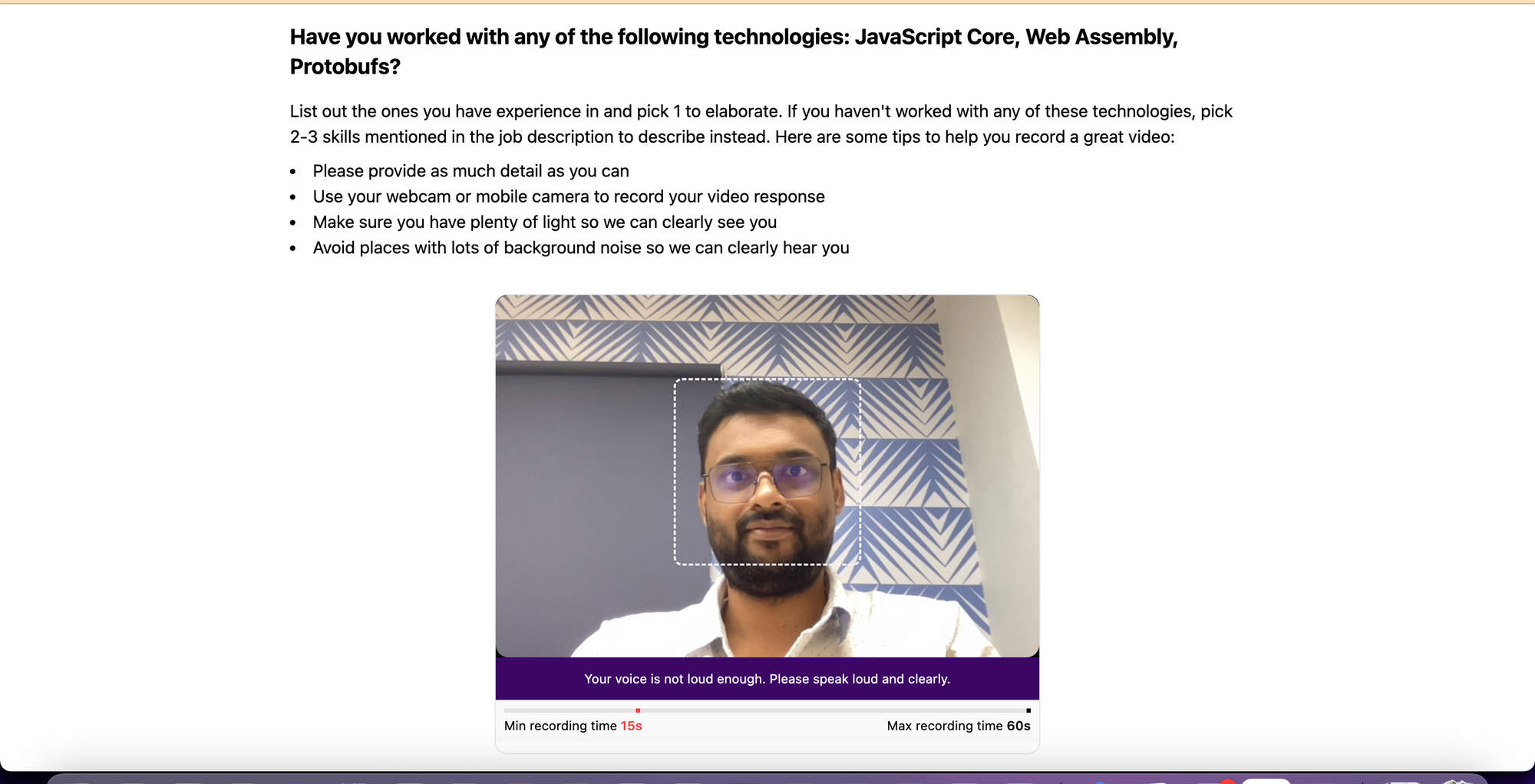

The user is taken to a screen where they are asked a question. To answer, they have to start the recording. The recording must be at least 15 seconds long and can be at most 60 seconds long; when it reaches 60 seconds, it stops automatically and the survey is completed. The user is shown a visual guide to center their face in the video and is prompted to speak up if their volume is low.
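To make the timing rules concrete, here is a minimal TypeScript sketch of the constraints described above. The names MIN_SECONDS, MAX_SECONDS, and recordingPhase are hypothetical and do not appear in the source; the app itself enforces these rules inside its React components.

```typescript
// Hypothetical helper: names are illustrative, not from the app's source.
const MIN_SECONDS = 15; // the answer must run at least this long
const MAX_SECONDS = 60; // recording stops automatically at this point

type RecordingPhase = 'idle' | 'too-short' | 'can-stop' | 'complete';

// Map the elapsed recording time (in seconds) to a phase of the survey.
// `undefined` means the recording has not started yet.
function recordingPhase(elapsed: number | undefined): RecordingPhase {
  if (elapsed === undefined) return 'idle';
  if (elapsed >= MAX_SECONDS) return 'complete';
  if (elapsed < MIN_SECONDS) return 'too-short';
  return 'can-stop';
}
```

In this sketch, the stop button would be disabled while the phase is 'too-short', and the recording would be stopped for the user once the phase becomes 'complete'.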

Understanding the code

Follow along with the code walkthrough as you read through the source at https://github.com/dyte-io/async-video-survey/blob/main/src/pages/survey.tsx.

In the source, we've set up a React app with Vite. This is the main <Meeting /> component, which automatically initializes and joins a Dyte meeting.

import { useEffect } from 'react';
import { DyteProvider, useDyteClient } from '@dytesdk/react-web-core';
import { DyteSpinner, provideDyteDesignSystem } from '@dytesdk/react-ui-kit';

export default function Meeting() {
  // useDyteClient() returns the meeting object and the initClient() method
  const [meeting, initClient] = useDyteClient();

  useEffect(() => {
    // use "light" theme
    provideDyteDesignSystem(document.body, {
      theme: 'light',
    });

    const searchParams = new URLSearchParams(window.location.search);

    // for testing we get authToken from URL search params
    // but in prod, you would get this as a response
    // from one of your backend APIs
    const authToken = searchParams.get('authToken');

    if (!authToken) {
      alert(
        "An authToken wasn't passed, please pass an authToken in the URL query to join a meeting.",
      );
      return;
    }

    initClient({
      authToken,
    }).then((meeting) => {
      // automatically join room
      meeting?.joinRoom();
    });
  }, []);

  return (
    <DyteProvider
      value={meeting}
      fallback={
        <div className="w-full h-full flex flex-col gap-4 place-items-center justify-center">
          <DyteSpinner className="w-14 h-14 text-blue-500" />
          <p className="text-xl font-semibold">Starting Dyte Video Survey</p>
        </div>
      }
    >
      <Recorder />
    </DyteProvider>
  );
}

We're using the useDyteClient() hook to initialize a meeting object via the initClient() method. It accepts the authToken value returned by the Add Participant API.

We then use the <DyteProvider /> component and pass the meeting object to it so that hooks such as useDyteMeeting() can consume it. We also provide a fallback UI to render until the meeting object is initialized.

If you are unfamiliar with generating the authToken, check out our 'Getting Started' guide at docs.dyte.io/getting-started.
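As a rough illustration of where the authToken comes from, here is a sketch of a backend helper that calls the Add Participant API. Everything specific in it is an assumption to verify against docs.dyte.io/api: the v2 endpoint path, Basic authentication with the Organisation ID and API Key, the preset_name and custom_participant_id body fields, and the token being returned at data.token. The helper names are hypothetical.

```typescript
// Assumed shape of Dyte's v2 "Add Participant" REST call; verify every
// field against docs.dyte.io/api before relying on it.
interface AddParticipantInput {
  orgId: string; // Organisation ID from dev.dyte.io
  apiKey: string; // API Key from dev.dyte.io
  meetingId: string; // ID of an existing meeting
  name: string; // display name of the respondent
  presetName: string; // preset configured in your Dyte organisation
  participantId: string; // your own identifier for this respondent
}

// Build the request without sending it, so the pieces are easy to inspect.
function buildAddParticipantRequest(input: AddParticipantInput) {
  const credentials = btoa(`${input.orgId}:${input.apiKey}`);
  return {
    url: `https://api.dyte.io/v2/meetings/${input.meetingId}/participants`,
    init: {
      method: 'POST',
      headers: {
        Authorization: `Basic ${credentials}`,
        'Content-Type': 'application/json',
      },
      body: JSON.stringify({
        name: input.name,
        preset_name: input.presetName,
        custom_participant_id: input.participantId,
      }),
    },
  };
}

// Send the request from your backend and return the participant's authToken.
// Assumes the response JSON carries the token at `data.token`.
async function fetchAuthToken(input: AddParticipantInput): Promise<string> {
  const { url, init } = buildAddParticipantRequest(input);
  const response = await fetch(url, init);
  if (!response.ok) {
    throw new Error(`Add Participant failed with status ${response.status}`);
  }
  const json = (await response.json()) as { data: { token: string } };
  return json.data.token;
}
```

Keep this call on your server: the API Key must never be shipped to the browser. Only the resulting authToken is passed to the frontend.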

Now, let's move on to the main logic in the <Recorder /> component.

// useDyteMeeting() comes from @dytesdk/react-web-core;
// the Dyte* components come from @dytesdk/react-ui-kit
function Recorder() {
  const { meeting } = useDyteMeeting();

  // time at which the current recording started (undefined when not recording)
  const [timestamp, setTimestamp] = useState<Date>();

  // global UI state emitted by the UI Kit components is stored here
  const [UIStates, setUIStates] = useState<any>({});

  // derived from `timestamp` value
  const [duration, setDuration] = useState(0);

  // state to disable recording button as per logic
  const [recordingDisabled, setRecordingDisabled] = useState(false);

  // error feedback states to be shown to the user
  const [errors, dispatchError] = useReducer(errorReducer, []);

  // custom hook to calculate brightness and silence from
  // video and audio tracks
  useBrightnessAndSilenceDetector(dispatchError);

  useEffect(() => {
    // calculate duration from recording timestamp
    if (timestamp) {
      const interval = setInterval(() => {
        const duration = getElapsedDuration(timestamp);
        setDuration(duration);
      }, 500);

      return () => {
        clearInterval(interval);
      };
    }
  }, [timestamp]);

  useEffect(() => {
    const onRecordingUpdate = (state: string) => {
      switch (state) {
        case 'RECORDING':
          setTimestamp(new Date());
          break;
        case 'STOPPING':
          setTimestamp(undefined);
          break;
      }
    };

    meeting.recording.addListener('recordingUpdate', onRecordingUpdate);

    return () => {
      meeting.recording.removeListener('recordingUpdate', onRecordingUpdate);
    };
  }, []);

  useEffect(() => {
    // stop recording when you reach max duration of 60 seconds
    if (duration >= 60) {
      meeting.recording.stop();
      // the survey is complete, so keep the record button disabled
      setRecordingDisabled(true);
    }
  }, [duration]);

  return (
    <div className="w-full h-full flex place-items-center justify-center p-4 flex-col">
      <div className="max-w-4xl pb-8">
        <h3 className="text-xl font-bold mb-4">
          Have you worked with any of the following technologies: JavaScript
          Core, Web Assembly, Protobufs?{' '}
        </h3>
        <div className="mb-2">
          List out the ones you have experience in and pick 1 to elaborate. If
          you haven't worked with any of these technologies, pick 2-3 skills
          mentioned in the job description to describe instead. Here are some
          tips to help you record a great video:
        </div>
        <li>Please provide as much detail as you can</li>
        <li>Use your webcam or mobile camera to record your video response</li>
        <li>Make sure you have plenty of light so we can clearly see you</li>
        <li>
          Avoid places with lots of background noise so we can clearly hear you
        </li>
      </div>

      <div className="flex flex-col w-full max-w-lg border rounded-xl overflow-clip">
        <div className="relative">
          <DyteParticipantTile
            participant={meeting.self}
            className="w-full h-auto rounded-none aspect-[3/2] bg-zinc-300"
          />
          <p className="text-white bg-purple-950 p-3 text-xs text-center">
            {/* Show okay message, or last error message */}
            {errors.length === 0
              ? messages['ok']
              : messages[errors[errors.length - 1]]}
          </p>
          {/* Show placement container only when recording hasn't started */}
          {!timestamp && (
            <div className="absolute w-44 z-50 left-1/2 -translate-x-1/2 top-1/2 -translate-y-28 aspect-square border-2 border-dashed border-pink-50 rounded-lg" />
          )}
        </div>

        {/* Duration indicator */}
        <Duration duration={duration} />

        <div className="flex items-center justify-center p-2">
          <DyteRecordingToggle
            meeting={meeting}
            disabled={(timestamp && duration <= 15) || recordingDisabled}
          />
          <DyteSettingsToggle
            onDyteStateUpdate={(e) => setUIStates(e.detail)}
          />
        </div>
      </div>

      <DyteDialogManager
        states={UIStates}
        meeting={meeting}
        onDyteStateUpdate={(e) => setUIStates(e.detail)}
      />
    </div>
  );
}

Breaking down the code:

- The timestamp state value is set from the meeting recording API in a useEffect containing the recordingUpdate event listeners.

- The UIStates state value gets updated whenever components like <DyteDialogManager /> or <DyteSettingsToggle /> emit global UI state updates.

- We derive the duration from the timestamp value in a useEffect.

- The state recordingDisabled is set as per the logic we defined before.

- To render the UI, we use the DyteParticipantTile, DyteSettingsToggle, DyteRecordingToggle and DyteDialogManager components from Dyte's React UI Kit. Note that DyteSettingsToggle emits a state whenever you toggle it, and the DyteDialogManager shows the DyteSettings component accordingly.

- Note how we disable the DyteRecordingToggle button as per the duration state.

- We also render a custom Duration component showing the progress of the recording duration along with our constraints.

- The useBrightnessAndSilenceDetector() hook updates the errors state that gets shown to the user.
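The Recorder component calls getElapsedDuration(), which is not shown in this post. A minimal sketch of what such a helper could look like follows; the whole-second rounding and the optional now parameter are assumptions made here for illustration, not details taken from the source.

```typescript
// Assumed implementation: the post uses getElapsedDuration() without showing it.
// Returns the number of whole seconds elapsed since `start`.
// `now` is injectable so the function is easy to test.
function getElapsedDuration(start: Date, now: Date = new Date()): number {
  const elapsedMs = now.getTime() - start.getTime();
  // clamp at 0 in case `now` is earlier than `start`
  return Math.max(0, Math.floor(elapsedMs / 1000));
}
```

Because the duration is recomputed from the start timestamp on every tick, it does not drift even if an interval callback fires late.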

The messages object contains a pre-defined list of strings for each error state:

const messages = {
  ok: 'Ensure your head and shoulders are in shot. Hit record when you are ready.',
  not_bright:
    'You seem to be in a dark room, please try turning on the lights.',
  not_loud: 'Your voice is not loud enough. Please speak loud and clearly.',
};
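The errors state is managed by errorReducer, which is referenced in the code but not shown in this post. One possible shape for it, inferred from the { type: 'add' | 'remove', error } actions dispatched by the hook, is sketched below. The type names SurveyError and ErrorAction are hypothetical.

```typescript
// Assumed implementation: errorReducer is referenced in the post but not shown.
type SurveyError = 'not_bright' | 'not_loud';

type ErrorAction =
  | { type: 'add'; error: SurveyError }
  | { type: 'remove'; error: SurveyError };

function errorReducer(state: SurveyError[], action: ErrorAction): SurveyError[] {
  const present = state.indexOf(action.error) !== -1;
  switch (action.type) {
    case 'add':
      // keep each error at most once; return the same array when nothing
      // changes so React can skip the re-render
      return present ? state : [...state, action.error];
    case 'remove':
      return present ? state.filter((e) => e !== action.error) : state;
    default:
      return state;
  }
}
```

Since the detectors dispatch once per second, returning the same array when nothing changed avoids re-rendering the component on every tick. The last entry in the array is the most recently added error, which is the one the UI displays.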

Next is the brightness and silence detector hook, useBrightnessAndSilenceDetector(). Its code looks like this:

function useBrightnessAndSilenceDetector(
  dispatchError: Dispatch<Parameters<typeof errorReducer>[1]>,
) {
  const { meeting } = useDyteMeeting();
  const videoEnabled = useDyteSelector((m) => m.self.videoEnabled);
  const audioEnabled = useDyteSelector((m) => m.self.audioEnabled);

  useEffect(() => {
    const { audioTrack } = meeting.self;
    if (!audioTrack || !audioEnabled) return;

    const stream = new MediaStream();
    stream.addTrack(audioTrack);

    const audioContext = new AudioContext();
    audioContext.resume();

    const analyserNode = audioContext.createAnalyser();
    analyserNode.fftSize = 2048;

    const micSource = audioContext.createMediaStreamSource(stream);
    micSource.connect(analyserNode);

    const bufferLength = 2048;
    const dataArray = new Float32Array(bufferLength);
    const silenceThreshold = 0.05;
    const segmentLength = 1024;

    // root mean square of the samples in [startIndex, endIndex)
    function getRMS(
      dataArray: Float32Array,
      startIndex: number,
      endIndex: number,
    ) {
      let sum = 0;
      for (let i = startIndex; i < endIndex; i++) {
        sum += dataArray[i] * dataArray[i];
      }
      const mean = sum / (endIndex - startIndex);
      const rms = Math.sqrt(mean);
      return rms;
    }

    function detectSilence() {
      analyserNode.getFloatTimeDomainData(dataArray);
      const numSegments = Math.floor(bufferLength / segmentLength);

      for (let i = 0; i < numSegments; i++) {
        const startIndex = i * segmentLength;
        const endIndex = (i + 1) * segmentLength;
        const rms = getRMS(dataArray, startIndex, endIndex);
        if (rms > silenceThreshold) {
          // Detected non-silence in this segment
          return false;
        }
      }

      // Detected silence
      return true;
    }

    const interval = setInterval(() => {
      const isSilent = detectSilence();
      if (isSilent) {
        dispatchError({ type: 'add', error: 'not_loud' });
      } else {
        dispatchError({ type: 'remove', error: 'not_loud' });
      }
    }, 1000);

    return () => {
      clearInterval(interval);
      // release the audio graph so contexts don't pile up when audio is toggled
      micSource.disconnect();
      audioContext.close();
      dispatchError({ type: 'remove', error: 'not_loud' });
    };
  }, [audioEnabled]);

  useEffect(() => {
    if (!videoEnabled) return;

    const { videoTrack } = meeting.self;
    if (!videoTrack) return;

    const videoStream = new MediaStream();
    videoStream.addTrack(videoTrack);

    // off-screen video element used only to sample frames
    const video = document.createElement('video');
    video.style.width = '240px';
    video.style.height = '180px';
    video.muted = true;
    video.srcObject = videoStream;
    video.play();

    const canvas = document.createElement('canvas');
    canvas.width = 240;
    canvas.height = 180;
    const ctx = canvas.getContext('2d', { willReadFrequently: true })!;

    const interval = setInterval(() => {
      const brightness = getBrightness(video, canvas, ctx);
      if (brightness < 0.4) {
        dispatchError({ type: 'add', error: 'not_bright' });
      } else {
        dispatchError({ type: 'remove', error: 'not_bright' });
      }
    }, 1000);

    return () => {
      clearInterval(interval);
      // stop sampling frames from the off-screen video element
      video.pause();
      video.srcObject = null;
      dispatchError({ type: 'remove', error: 'not_bright' });
    };
  }, [videoEnabled]);

  return null;
}

It takes the audioTrack and videoTrack objects from the meeting object and processes them to detect silence or low volume and low brightness, respectively. It then dispatches the corresponding errors for our UI to show.
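The hook relies on a getBrightness() helper that is not shown in this post. The sketch below covers only the pixel math such a helper might use, under the assumption that brightness is the average luma of the frame scaled to the 0 to 1 range (which would fit the 0.4 threshold used in the hook). The function name averageBrightness and the choice of Rec. 601 luma weights are assumptions, not the author's implementation.

```typescript
// Assumed approach: getBrightness() is not shown in the post. This helper
// covers only the pixel math; it expects RGBA bytes such as the `data`
// field returned by CanvasRenderingContext2D.getImageData().
function averageBrightness(pixels: Uint8ClampedArray): number {
  const pixelCount = Math.floor(pixels.length / 4);
  if (pixelCount === 0) return 0;

  let total = 0;
  for (let i = 0; i < pixelCount * 4; i += 4) {
    // Rec. 601 luma weights; alpha (pixels[i + 3]) is ignored
    total += 0.299 * pixels[i] + 0.587 * pixels[i + 1] + 0.114 * pixels[i + 2];
  }

  // normalise from 0..255 to 0..1
  return total / pixelCount / 255;
}
```

Inside a getBrightness(video, canvas, ctx) function, you could draw the current frame with ctx.drawImage(video, 0, 0, canvas.width, canvas.height) and then pass ctx.getImageData(0, 0, canvas.width, canvas.height).data to this helper. Sampling a small 240x180 canvas keeps the per-second cost low.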

Finally, let's take a look at the Duration component and the ProgressBar component.

function ProgressBar({
  value,
  max,
}: {
  value: number;
  max: number;
}) {
  return (
    <div className="h-1 w-full bg-zinc-200 relative">
      {/* red segment: fills up to the minimum-duration marker (25%) */}
      <div
        className="max-w-[25%] h-full bg-red-500 z-10 absolute left-0 top-0"
        style={{
          width: Math.min((value / max) * 100, 25) + '%',
          transition: 'all 0.3s',
        }}
      ></div>
      {/* black segment: fills up to the maximum duration (100%) */}
      <div
        className="max-w-full h-full z-0 bg-black absolute left-0 top-0"
        style={{
          width: Math.min((value / max) * 100, 100) + '%',
          transition: 'all 0.3s',
        }}
      ></div>
    </div>
  );
}

function Duration({ duration }: { duration: number }) {
  return (
    <div className="flex flex-col gap-1 bg-zinc-50 p-2 text-center">
      <div className="relative">
        <ProgressBar value={duration} max={60} />
        <div className="z-10 bg-red-500 absolute left-1/4 h-full w-1 top-0"></div>
        <div className="z-10 bg-black absolute right-0 h-full w-1 top-0"></div>
      </div>
      <div className="text-xs flex items-center justify-between">
        <div>
          Min recording time{' '}
          <span className="font-semibold text-red-500">15s</span>
        </div>
        <div>
          Max recording time <span className="font-semibold">60s</span>
        </div>
      </div>
    </div>
  );
}

We wrote a custom UI with the help of TailwindCSS to render the Duration and ProgressBar components. The bar is red until the 15-second minimum is reached and black from there up to the 60-second maximum.
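The width math in ProgressBar can be read as a small pure function. The sketch below mirrors the two Math.min expressions from the component; the function name progressWidths is hypothetical. The 25% cap on the red segment corresponds to the 15-second minimum out of the 60-second maximum (15 / 60 = 25%).

```typescript
// Mirrors the width math in ProgressBar; the function name is hypothetical.
// Returns widths as percentages of the full bar.
function progressWidths(value: number, max: number) {
  const percent = (value / max) * 100;
  return {
    red: Math.min(percent, 25), // capped at the 15s marker (15 / 60 = 25%)
    black: Math.min(percent, 100), // capped at the 60s maximum
  };
}
```

Note that the 25% cap is hardcoded, so it only lines up with the minimum marker while the limits stay at 15 and 60 seconds. If you change either limit, derive the cap from the two values instead.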

Now, the user can start answering the question after hitting the record button. You can access the demo at https://dyte-async-video-survey.vercel.app/ and the GitHub repo at https://github.com/dyte-io/async-video-survey/blob/main/src/pages/survey.tsx.

Conclusion

With this, we have built an async video survey tool: a React app that joins a Dyte meeting, records an answer between 15 and 60 seconds long, and nudges the respondent when the lighting or volume is too low. You can adapt the question, the constraints, and the interface to your own surveys.

While on the subject of async tools, check out this blog we wrote on creating an async interview platform!

If you have any thoughts or feedback, please get in touch with me on LinkedIn and Twitter. Stay tuned for more related blog posts in the future!

Get better insights on leveraging Dyte's technology and discover how it can revolutionize your app's communication capabilities with its SDKs. Head over to dyte.io to learn how to start quickly on your 10,000 free minutes, which renew every month. You can reach us at support@dyte.io or ask our developer community if you have any questions.